😺 Meta bought a social network run by bots

Welcome, humans.

So Meta acquired an AI social network where AI agents posted fake content, and the posts went viral anyway.

Dead internet theory go brrrrVVVRRROOOOm?!

ICYMI, the platform is called Moltbook. It was designed as a social network for AI agents to interact with each other. The problem (or depending on your perspective, the opportunity) was that users couldn't tell which posts were from bots and which were from people. The engagement numbers were apparently so impressive that Meta swooped in to acquire the company and bring its founders into the Superintelligence Labs team.

A social network full of fake posts that people can't stop engaging with. Meta must have felt right at home.

Say what you want about the strategy, but Meta is clearly betting that the future of social isn't human-to-human; it's human-to-agent-to-human. And honestly? They might be right.

But be honest: you know they’re just trying to sell ads to agents and cut out the OpenAI middleman. Classic, Meta!

Here’s what happened in AI today:

😻 When AI agents get real access to real systems, this NVIDIA security rule could save your company.

📰 Yann LeCun raised over $1B (Europe's largest-ever seed round) for a new AI lab building world models.

📰 The White House is preparing an executive order to cut all federal ties with Anthropic over "woke" AI safety guardrails.

🎓 NVIDIA's internal "Rule of Two" for keeping AI agents from wrecking your systems.

🧪 ChatGPT now creates interactive visual explanations for 70+ math and science concepts.

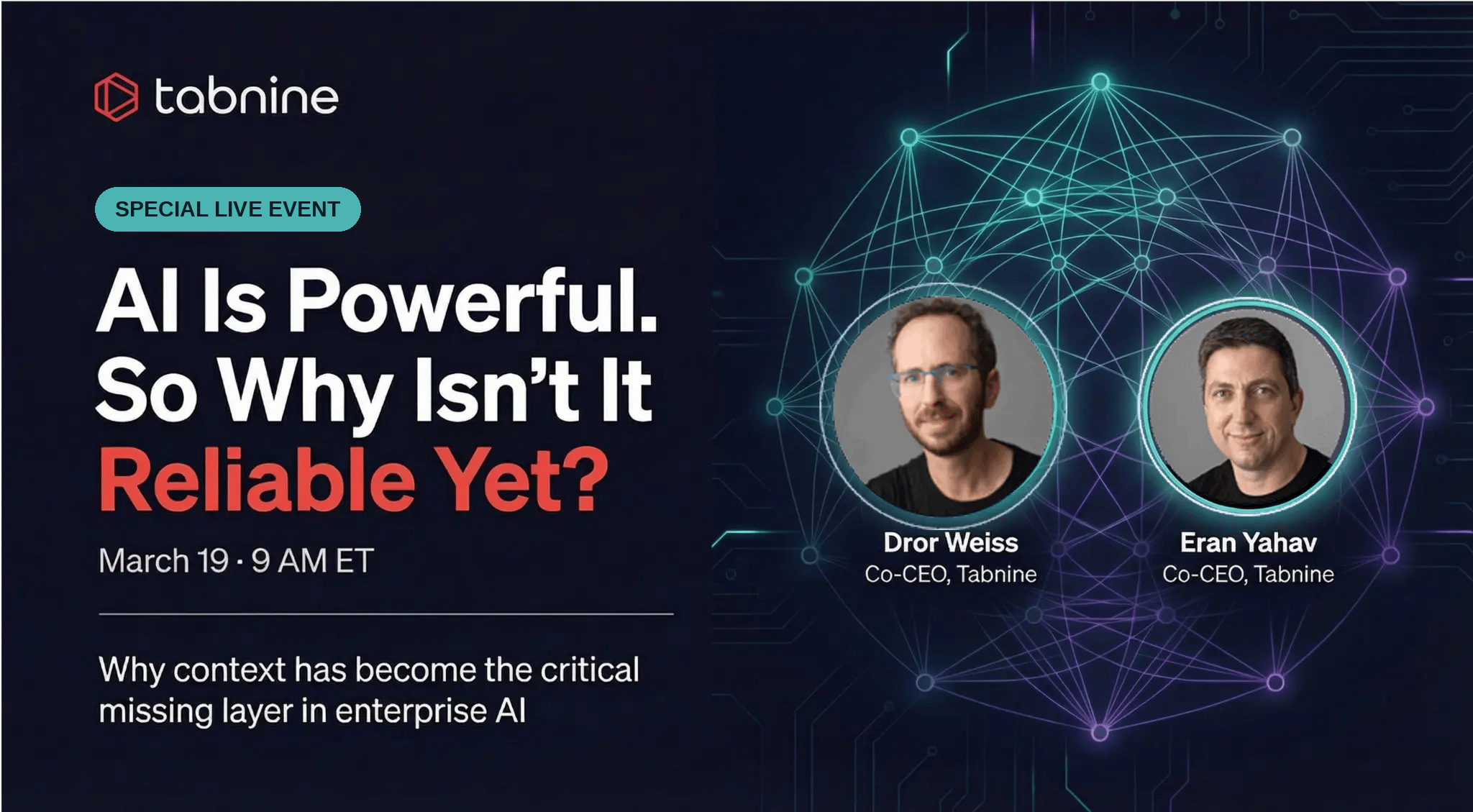

😻 Everyone's Building AI Agents. Almost Nobody's Securing Them.

So "The Enterprise AI Strikes Back" week has struck again, and this time it's Google, with some new updates to Gemini in Drive.

Google rolled Gemini into Docs, Sheets, Slides, and Drive for its 3 billion Workspace users. Gemini now writes formulas, pulls data from the web, builds dashboards, and reformats entire presentations on command. It's basically an agentic collaborator living inside the tools you use eight hours a day.

Meanwhile, a wave of innovative new AI labs is building the infrastructure to make agents even more capable:

Yann LeCun raised $1B for AMI Labs to build AI that understands the physical world through world models, persistent memory, and planning. Jürgen Schmidhuber had some thoughts about whose idea that was.

Mira Murati's Thinking Machines Lab signed a gigawatt-scale strategic partnership with NVIDIA for next-gen Vera Rubin systems, a deal big enough to quiet skeptics who questioned whether the startup had substance beyond its famous founder.

Andrej Karpathy let an autonomous Claude agent run overnight on his codebase. It discovered ~20 improvements and committed them all; no human review required.

So who's thinking about security here?

We talked to Microsoft about exactly this, so we know they are. But also, actually, so is NVIDIA. On the Latent Space podcast, NVIDIA's Brev team shared their internal "Rule of Two":

AI agents can do three things (access files, access the internet, execute code), and you should only ever let them do two at once.

Files + code execution? Fine, but kill internet access.

Internet + files? Lock down what the agent can do.

All three? That's how malware gets injected.
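
To make the rule concrete, here's a minimal Python sketch of a pre-launch check that refuses any agent configuration granting all three capabilities at once. This is our own illustration, not NVIDIA's tooling: the names `Capability`, `count_capabilities`, and `check_rule_of_two` are hypothetical, and a real deployment would enforce the limits at the sandbox or network layer rather than in a helper function.

```python
from enum import Flag, auto


class Capability(Flag):
    """The three agent capabilities named in the rule (hypothetical names)."""
    NONE = 0
    FILES = auto()      # read/write access to your files
    INTERNET = auto()   # outbound network access
    CODE_EXEC = auto()  # ability to execute code


# Listed explicitly so the count does not depend on Flag iteration behavior.
_SINGLE_CAPABILITIES = (Capability.FILES, Capability.INTERNET, Capability.CODE_EXEC)
ALL_THREE = Capability.FILES | Capability.INTERNET | Capability.CODE_EXEC


def count_capabilities(granted: Capability) -> int:
    """Return how many of the three capabilities are present in `granted`."""
    return sum(1 for cap in _SINGLE_CAPABILITIES if cap in granted)


def check_rule_of_two(granted: Capability) -> None:
    """Raise PermissionError if the configuration grants more than two capabilities."""
    if count_capabilities(granted) > 2:
        raise PermissionError(
            "Rule of Two violated: drop file access, internet access, "
            "or code execution before launching this agent."
        )


# Files + code execution with no internet passes the check.
check_rule_of_two(Capability.FILES | Capability.CODE_EXEC)
```

Calling `check_rule_of_two(ALL_THREE)` raises `PermissionError`, which is the point: the unsafe combination fails loudly before the agent ever starts.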

Why this matters: Because agents aren't theoretical anymore. One just hacked McKinsey's internal chatbot in under two hours, gaining access to 46.5 million chat messages and 728,000 confidential client files. That wasn't a nation-state attack. It was one autonomous agent exploiting a SQL injection through an unauthenticated API.

NVIDIA says they also won't put company data in any model they don't control internally. They run their own models on internal Dynamo clusters.

Must be nice.

For the rest of us, that means checking your data agreements before letting an agent loose on proprietary information.

We just watched the first cut of an upcoming podcast episode in which we talk to Proton about AI and privacy. It'll make your hair stand on end, so definitely look out for that one coming soon!

Our take? The organizations that move fastest with agents will be the ones that draw smart boundaries from day one, not those who ignore security or block everything.

As they say, "don't say no, say how."

FROM OUR PARTNERS

How teams plan to use MCP this year

Most teams building AI agents plan to adopt the Model Context Protocol (MCP) this year. Most of those same teams have serious security concerns about it.

To understand how teams are navigating this tradeoff, we surveyed hundreds of AI leaders building AI agents for our first-ever state of agentic integrations report.

Their top concerns?

70% worry about credential leaks and malicious servers

56% say MCP doesn’t support enterprise search well

51% report ambiguous tool definitions causing incorrect tool calls

Get your free copy to learn more.

🎓 AI Skill of the Day: The "Rule of Two" for Agent Security

Giving AI agents access to your systems? Here's a framework NVIDIA uses internally.

The rule: Agents can do three things: access your files, access the internet, and execute code. Only let them do two at a time.

If an agent can read your files and run code, internet access is the vulnerability. Malware from the web runs against your private data. If it has internet and file access, you need to know the exact scope of what it can do.

How to apply it:

Sandbox first. Run agents in an isolated environment before they touch your network. NVIDIA runs OpenClaw on Brev, a sandboxed VM completely off the corporate network.

Build CLIs, not raw API access. A CLI pre-defines the exact commands an agent can run. An agent writing raw API calls? That's the agent deciding what's possible.

Involve security early. NVIDIA's team co-designed their sandboxing rather than approving it after the fact.
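
The "build CLIs" tip can be sketched in a few lines of Python using the standard library's `argparse`. Treat this as an illustration under assumptions, not NVIDIA's actual setup: the tool name `agent-tools` and the commands `list-tickets` and `get-ticket` are made up, and the handlers are stubs standing in for calls to a real backend.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Define the only commands the agent is allowed to run (hypothetical names)."""
    parser = argparse.ArgumentParser(prog="agent-tools")
    sub = parser.add_subparsers(dest="command", required=True)

    # Read-only listing: takes no arguments at all.
    sub.add_parser("list-tickets", help="list open tickets")

    # Single-record lookup: the only input is a typed, validated integer.
    get = sub.add_parser("get-ticket", help="fetch one ticket by numeric id")
    get.add_argument("--id", type=int, required=True)

    return parser


def run(argv: list[str]) -> str:
    """Parse the agent's request and dispatch to a stub handler."""
    args = build_parser().parse_args(argv)
    if args.command == "list-tickets":
        return "tickets: (stub)"
    # The only other command the parser accepts is get-ticket.
    return f"ticket {args.id}"


# An allowed command goes through to its handler.
result = run(["get-ticket", "--id", "42"])
```

Anything outside the allowlist, say `run(["delete-everything"])`, makes `argparse` exit with an error before any handler runs. The agent picks from a menu you wrote instead of composing arbitrary API calls.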

Copy-paste prompt:

You are a security review assistant. I'm deploying an AI agent that will have access to [describe: files/internet/code execution]. Using the "Rule of Two" framework (agents should only have 2 of 3 capabilities: file access, internet access, code execution), identify which capability I should restrict, explain why, and suggest specific sandboxing measures for my setup.

Treats to Try

*

Live Event: AI Is Powerful. Why Isn’t It Reliable?

Dror Weiss and Eran Yahav, co-CEOs of Tabnine, explore why context has become the critical missing layer in enterprise AI.

Sign Up for the Live Event

ChatGPT now creates interactive visual explanations for 70+ math and science concepts where you adjust variables in real-time graphs.

Fish Audio S2 generates expressive speech with sub-150 ms latency, multi-speaker in one pass, and inline emotion tags like [laugh] and [whisper] for rapid voice cloning (GitHub, HF model). Free to try.

Expo Agent builds native iOS and Android apps from a prompt in React Native, SwiftUI, or Jetpack Compose, then compiles and deploys right from the browser. Join waitlist.

Claude Code now includes built-in code review so you can ask it to review, suggest, and apply changes directly in your codebase.

Reflct guides daily reflection and mood tracking with personalized AI journaling prompts.

Around the Horn

Adobe debuted a new AI assistant built directly into Photoshop.

Zoom introduced an AI-powered office suite and said AI avatars for meetings arrive this month.

YouTube expanded its deepfake detection tool to cover politicians, government officials, and journalists.

OpenAI and Google DeepMind employees (including Jeff Dean) filed an amicus brief supporting Anthropic against the US government's supply-chain risk designation.

Legora reached a $5.55B valuation as the AI legal-tech boom continues.

FROM OUR PARTNERS

Is your Python AI environment a security blind spot?

In Snyk's new white paper, discover the attack surfaces hiding in plain sight within the modern Python AI environment and learn how to regain visibility and control. You'll learn how to surface toxic flows with MCP scan before they become incidents, generate an AI-BOM, detect shadow AI, and audit AI component risks to maintain continuous compliance.

📖 Midweek Wisdom:

Worth reading this week:

The Verge: Laid-off lawyers, PhDs, and scientists are now doing precarious gig work training the exact AI replacing their careers.

Zvi Mowshowitz breaks down Claude Code, Cowork, and Codex as the first real "AI coworkers," with a clear-eyed look at the autonomy vs. data loss tradeoff.

Martin Alderson debunks the viral claim that Claude Code costs Anthropic $5K per user. The real number is closer to $500. The HN thread (296 comments) is worth the scroll.

WIRED: Can AI kill the venture capitalist? When startups need less capital and AI automates diligence, the VC model starts looking fragile.

VC Cafe: The researcher's new job is writing the spec, not running the experiment.

A Cat’s Commentary

P.S: Before you go… have you subscribed to our YouTube Channel? If not, can you?

P.P.S: Love the newsletter, but only want to get it once per week? Don't unsubscribe; update your preferences here.